AI COMPUTE

Purpose-built AI compute for AI builders

An ideal supplement to your existing hyperscalers, Zai Node provides HPC GPUs, turnkey orchestration and advanced models in an integrated platform for developing and running cutting-edge AI.

OUR COMPUTE OFFERINGS

ON PREM

Buy or rent our HPC servers, installed at your premises for complete control and data protection. Available with NVIDIA RTX Pro 6000 Blackwell GPUs or a custom configuration.

CLOUD

Hosted in your cloud or Zai Node’s data centres in Australia and Germany. A fully managed stack that delivers access to cutting-edge NVIDIA GPUs.

EDGE DEVICES

Proprietary, purpose-built hardware and sensors with DGX Spark, enabling real-time data processing, ML and analysis offline, with no cloud.

DESKTOP WORKSTATION

HPC workstation with NVIDIA RTX Pro 6000 Blackwell graphics cards for local dev: train, fine-tune and run complex models on large datasets.

Why have your own AI compute?

Why Zai Node AI compute?

Local control

Keep data in your Australian or European regions.

Flexible design, easy upgrades

Choose bare metal, managed clusters or APIs, and scale up or down within 24 hours, not months.

Scalable results, better ROI

Zai Node helps you scale AI while reducing cost. Connect models, agents, data and work under one lineage system.

Portability options

Deploy seamlessly across cloud and/or on-prem; dedicated environments for security, compliance, or performance; or self-hosted for full control.

Fast access, zero config

Achieve peace of mind when standing up AI infra with NVIDIA, app frameworks, dev tools and microservices optimised to run on Zai Node systems.

Expert support & warranty

Hands-on support from dedicated engineers for any infra, OS or ML issues.

ON PREM

No-compromise data protection. With our fully air-gapped on-prem HPC servers, you get the performance of the cloud with the security of your own premises. Your data, your servers, your control. Run serious workloads: train models, process data, build inference endpoints.

Buy or rent

Flexible ownership to suit your CapEx or OpEx needs.

Latest tech

Equipped with NVIDIA RTX Pro 6000 Blackwell GPUs.

Custom build options

Tailored to your workloads and budgets. Choose CPU, GPU, RAM and storage.

SERVER SPECIFICATIONS

GPU — 8x NVIDIA RTX PRO 6000 Blackwell Server Edition 96GB Graphics Card (768GB total VRAM), 1008 TFLOPS

CPU — 2x Intel Xeon 6767P 64C/128T 2.40/3.90GHz CPU; or 2x AMD EPYC 9254 (24-core, 128MB cache)

RAM — 1024GB 6400MHz DDR5 ECC RAM; or 384GB DDR5 ECC RDIMM

OS — Ubuntu Server 22.04 LTS

Storage — 1.92TB Micron 7450 PCIe 4.0 NVMe SSD; or 2x 1TB PCIe 4.0 NVMe M.2 SSD Boot Drive

Network — Dual 10GbE ports; or 2x 25G Network Adapter

Data drive — 16TB PCIe 5.0 NVMe SSD

Chassis — ASUS ESC8000A-E12P; or NVIDIA MGX 4U

Dimensions — Width: 9.5" (240mm), Height: 22.8" (580mm), Depth: 20" (560mm), Weight: 43-55 lbs

Other — N + N Redundancy; Static IP
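As a rough guide to what 768GB of total VRAM implies, the sketch below estimates whether a model's weights alone would fit across the eight 96GB GPUs. The function names, the 20% headroom figure and the example parameter counts are illustrative assumptions, not Zai Node sizing guidance. Real usage also depends on activations, KV cache and framework overhead.

```python
# Rough sizing sketch. Names and the headroom figure are illustrative assumptions.

GPU_COUNT = 8          # from the server specification above
VRAM_PER_GPU_GB = 96   # from the server specification above


def total_vram_gb(gpu_count: int = GPU_COUNT, per_gpu_gb: int = VRAM_PER_GPU_GB) -> int:
    """Total VRAM across all GPUs, in GB."""
    return gpu_count * per_gpu_gb


def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Memory for model weights only, in GB (1 GB taken as 1e9 bytes)."""
    return params_billion * bytes_per_param


def weights_fit(params_billion: float, bytes_per_param: float, headroom: float = 0.2) -> bool:
    """True if the weights fit while leaving `headroom` of VRAM free (assumed 20%)."""
    usable_gb = total_vram_gb() * (1 - headroom)
    return weights_gb(params_billion, bytes_per_param) <= usable_gb


if __name__ == "__main__":
    print(total_vram_gb())       # 8 * 96 = 768
    print(weights_fit(70, 2))    # 70B parameters at 16-bit: 140 GB of weights
    print(weights_fit(405, 2))   # 405B parameters at 16-bit: 810 GB of weights
```

Weights are only a lower bound, so treat a "fits" result as a starting point for a proper capacity discussion.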

CLOUD / DATA CENTRES

Zai Node’s servers, hosted in your data centre or ours in Australia and Germany. A fully managed stack that delivers access to cutting-edge NVIDIA GPUs.

Buy or rent

Flexible ownership to suit your CapEx or OpEx needs.

Tier 1 data centres

Our IRAP-certified DC provides a dedicated private environment for strict governance and compliance, with VPN, fast internet and direct connects.

Managed infrastructure

Access to fully orchestrated GPU clusters with native integrations into existing DevOps workflows.

OUR DC AT EQUINIX ME2 IN MELBOURNE

OUR HPC SERVERS, ON PREM

OUR SECURE AI COMPUTE RACKSPACE

EDGE DEVICES

Real-time data processing at the rugged edge

Our proprietary edge hardware and sensors are purpose built, enabling real-time data processing, machine learning, and analysis without the cloud or internet.

CHALLENGES ZAI NODE SOLVES

Modern IoT and smart devices generate enormous volumes of sensor, video, CAN and telemetry data, but the data is:

Passive data

Collected passively and analysed later.

Needs high bandwidth

Dependent on high‑bandwidth connectivity, online only.

Poor hardware

Processed on general-purpose hardware not designed for harsh in-situ conditions.

ZAI NODE’S KEY CAPABILITIES

Our solutions combine ruggedised AI hardware, industry‑grade interfaces, and embedded intelligence.

Real time

Inference at the edge immediately detects anomalies, failures and performance deviations.

On device

Reducing and prioritising data with only the most valuable data logged or transmitted.

Integrated

Native support for related networks, sensors and workflows.

Private

Sensitive data is processed directly on the device, for complete privacy.

Offline

Offline and low‑latency operation with data available and processed without connectivity.
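The "real time" and "on device" capabilities above can be pictured with a minimal sketch: a rolling z-score filter that flags unusual sensor readings locally, so that only flagged values need to be logged or transmitted. This is an illustrative technique chosen for brevity, not a description of Zai Node's embedded software. The class name, window size and threshold are assumptions.

```python
from collections import deque
from statistics import mean, pstdev


class EdgeAnomalyFilter:
    """Flags readings that deviate strongly from a rolling baseline (hypothetical example)."""

    def __init__(self, window: int = 20, z_threshold: float = 3.0, min_baseline: int = 5):
        self.history = deque(maxlen=window)  # recent normal readings
        self.z_threshold = z_threshold       # assumed cut-off, in standard deviations
        self.min_baseline = min_baseline     # readings required before flagging starts

    def process(self, value: float) -> bool:
        """Return True if `value` is anomalous and worth logging or transmitting."""
        anomalous = False
        if len(self.history) >= self.min_baseline:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            # A perfectly flat baseline (sigma == 0) flags nothing in this simple sketch.
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                anomalous = True
        if not anomalous:
            self.history.append(value)  # keep outliers out of the baseline
        return anomalous


if __name__ == "__main__":
    sensor = EdgeAnomalyFilter()
    readings = [20.0, 20.1, 19.9, 20.2, 19.8, 20.0, 35.0, 20.1]
    flagged = [r for r in readings if sensor.process(r)]
    print(flagged)  # only the 35.0 spike is flagged
```

Because the filter keeps only a small window in memory and needs no network access, the same pattern works offline on constrained hardware.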

IDEAL FOR MANUFACTURERS AND OPERATORS

Zai Node supports asset owners and operators in modernising existing sensor and device fleets. We also work with sensor manufacturers to train, manage, deploy and embed AI/ML and software alongside their sensors.

Zai Node’s edge AI in production

DESKTOP WORKSTATIONS

No-compromise data protection with Zai Node’s desktop HPCs. Your data, your personal server, your control. Run serious workloads: train models, process data, build inference endpoints.

Buy or rent

Flexible ownership to suit your CapEx or OpEx needs.

Latest tech

Equipped with NVIDIA RTX Pro 6000 Blackwell GPUs.

Custom options

Tailored to your work and budget. Choose CPU, GPU, RAM and storage.

DESKTOP SPECIFICATIONS

GPU — 4x NVIDIA RTX PRO 6000 Blackwell Max-Q 96GB Graphics Card (384GB total VRAM), 300W per GPU

CPU — AMD Ryzen Threadripper PRO 7975WX (liquid cooled with Silverstone XE360-TR5); 32 cores / 64 threads; Base clock: 4.0 GHz, Boost up to 5.3 GHz; 8-channel DDR5 memory controller

RAM — 256GB DDR5 RAM; 8 channels

OS — Ubuntu Server

Storage — 8TB total: 4x 2TB PCIe 5.0 NVMe SSDs, x4 lanes each (up to 14,900 MB/s theoretical per drive)

Network — Dual 10GbE ports; or 2x 25G Network Adapter

Data drive — 16TB PCIe 5.0 NVMe SSD

Chassis — ATX

Dimensions — Width: 9.5" (240mm), Height: 12" (304mm), Depth: 20" (560mm), Weight: 43-55 lbs

Other — N + N Redundancy; Static IP
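For context on the storage figures, the sketch below works out the theoretical ceiling of a PCIe 5.0 x4 link (32 GT/s per lane with 128b/130b encoding) and the aggregate across four drives, using the 14,900 MB/s per-drive figure from the specification. Function names are illustrative, and sustained throughput in practice will be lower than these theoretical numbers.

```python
PCIE5_GT_PER_S = 32.0              # PCIe 5.0 raw signalling rate per lane
ENCODING_EFFICIENCY = 128 / 130    # 128b/130b line encoding


def pcie5_link_mb_per_s(lanes: int) -> float:
    """Theoretical one-direction bandwidth of a PCIe 5.0 link, in MB/s."""
    gbit_per_s = PCIE5_GT_PER_S * lanes * ENCODING_EFFICIENCY
    return gbit_per_s / 8 * 1000   # Gbit/s -> GB/s -> MB/s


def aggregate_mb_per_s(drives: int, per_drive_mb_per_s: int) -> int:
    """Sum of per-drive theoretical throughput, assuming no other bottleneck."""
    return drives * per_drive_mb_per_s


if __name__ == "__main__":
    print(round(pcie5_link_mb_per_s(4)))   # link ceiling per x4 drive, about 15754 MB/s
    print(aggregate_mb_per_s(4, 14_900))   # 4 drives * 14,900 MB/s = 59600 MB/s
    print(4 * 2)                           # 4 drives * 2TB = 8TB total capacity
```

The quoted per-drive figure sits just under the x4 link ceiling, which is why it is described as theoretical.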

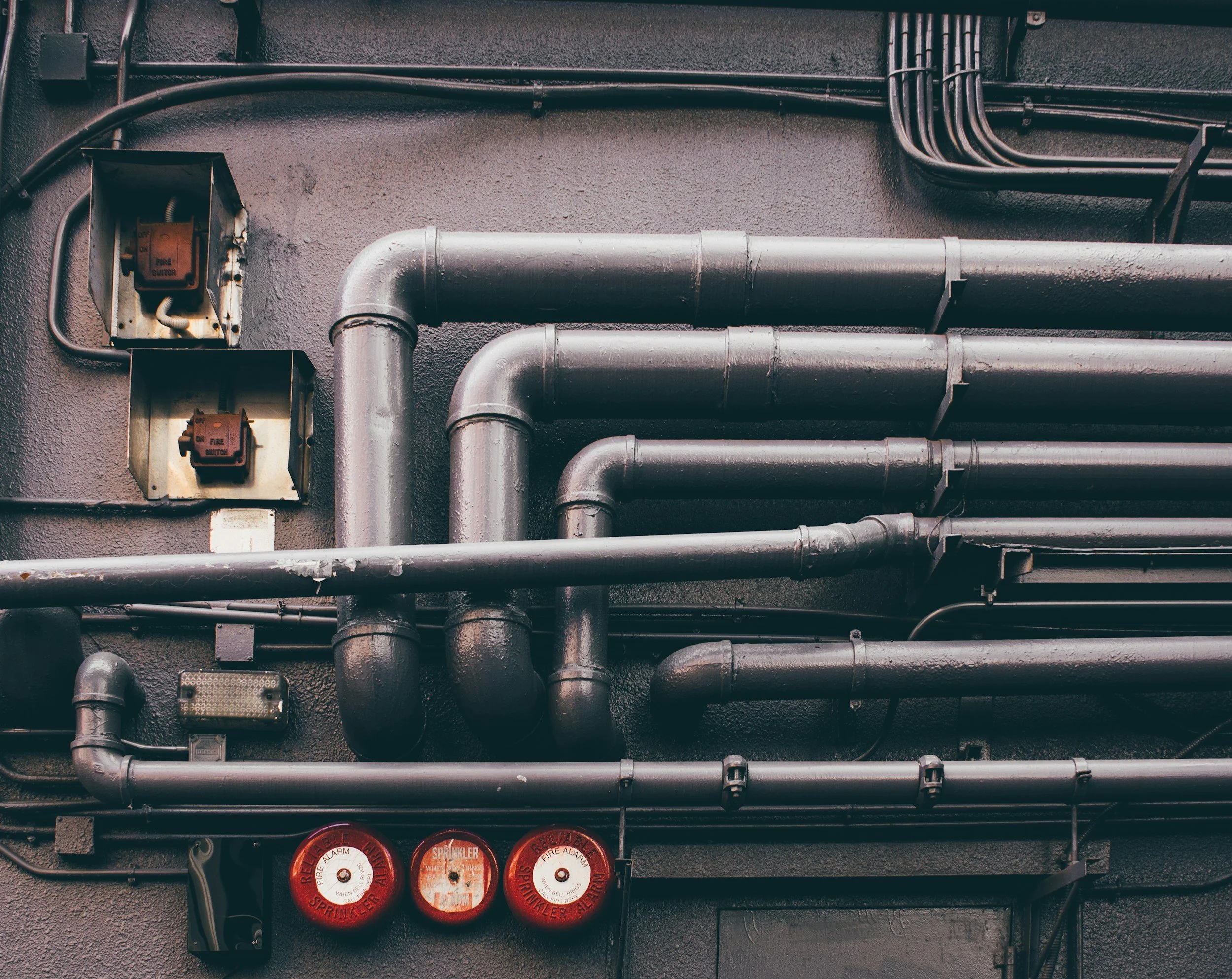

Key attributes of Zai Node’s compute

Power use

Our AI servers require extreme rack densities (30–60 kW per rack). Scaling this demands advanced electrical design, intelligent distribution and redundant power systems. Our infra, chips, models and software are carefully designed to minimise energy use and maximise value per watt utilised.

Cooling systems

Our closed cooling loops eliminate evaporation, drastically reducing municipal water demand. Our system design prioritises drought resilience and minimal draw from community resources. We have built a novel prototype immersion-cooled GPU cluster, which we believe is an Australian first, with significant energy-efficiency benefits.

Networking

Our segregated high-performance network includes hardware management, hypervisor and cluster management, inference serving networks, and training networks with extreme east–west bandwidth (400–800 Gbps per node), enabling frontier LLM training. Multi-path fibre and automatic failover provide resilience and continuous availability.

Compute & storage

Dense GPU servers with NVLink and PCIe Gen5 architectures, optimised for AI training and inference. CPU compute includes high-core-count systems for orchestration, preprocessing and hybrid applications. Storage combines hot, NVMe-based distributed storage for active training datasets with cool, erasure-coded object storage for archival and compliance requirements.
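To illustrate what "erasure-coded" means for the archival tier, here is a minimal sketch using single-parity XOR, the simplest erasure code. It is an assumption made for illustration: the page does not say which scheme is used, and production object stores typically use Reed-Solomon style codes that tolerate several lost shards. All function names are hypothetical.

```python
from functools import reduce


def storage_overhead(data_shards: int, parity_shards: int) -> float:
    """Raw capacity consumed per unit of user data, e.g. 4+2 gives 1.5x."""
    return (data_shards + parity_shards) / data_shards


def xor_parity(shards: list[bytes]) -> bytes:
    """Byte-wise XOR of equal-length shards."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*shards))


def recover_missing(surviving: list[bytes], parity: bytes) -> bytes:
    """Rebuild the one missing data shard from the survivors and the parity shard."""
    return xor_parity(surviving + [parity])


if __name__ == "__main__":
    data = [b"abcd", b"efgh", b"ijkl"]
    parity = xor_parity(data)
    rebuilt = recover_missing([data[0], data[2]], parity)
    print(rebuilt == data[1])       # the lost middle shard is recovered
    print(storage_overhead(4, 2))   # 1.5, versus 3.0 for three full replicas
```

The overhead comparison is the main appeal for archival data: durability at a fraction of the raw capacity that full replication would need.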

Simple & fast

We can spin GPU compute up or down with 24 hours’ notice. We also engineer controls to prevent unexpected run time when users forget to pause compute.
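One way to picture a control against forgotten compute is an idle check that recommends pausing once GPU utilisation has stayed low for a sustained period. The sketch below is a hypothetical policy, not Zai Node's actual mechanism. The threshold, the sample count and every name in it are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IdlePolicy:
    utilisation_threshold: float = 0.05  # assumed: below 5% utilisation counts as idle
    idle_samples_required: int = 6       # assumed: e.g. six consecutive 5-minute samples


def should_pause(utilisation_samples: list[float], policy: IdlePolicy = IdlePolicy()) -> bool:
    """True if the most recent samples are all below the idle threshold."""
    n = policy.idle_samples_required
    if len(utilisation_samples) < n:
        return False  # not enough history to decide
    recent = utilisation_samples[-n:]
    return all(u < policy.utilisation_threshold for u in recent)


if __name__ == "__main__":
    print(should_pause([0.9, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]))  # idle for six samples
    print(should_pause([0.0, 0.0, 0.0, 0.0, 0.0, 0.5]))       # recent activity
```

Requiring several consecutive idle samples avoids pausing a job that is merely between batches.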

Quick cross connects

As we’re colocated in major DCs, cross connects are fast and secure. Ideal for connecting your AI workloads to other devices, networks or DCs, all while avoiding the public internet. Connect to e.g. Anthropic, AWS Bedrock, AWS, Bard AI, OpenAI, Azure, Kubernetes Engine … all within minutes.

Hardware Manifest & Attestation

For each server or workstation, Zai Node provides fully certified Hardware Manifest and Attestation documentation, ensuring security protocols are met.

Third-party inspections

Unlike hyperscalers, Zai Node opens its infra to physical and digital third-party inspections and verifications.

Certificate of Origin

Our servers and workstations are accompanied by a Certificate of Australian Origin.